The Test Pyramid Doesn't Survive Contact with an LLM

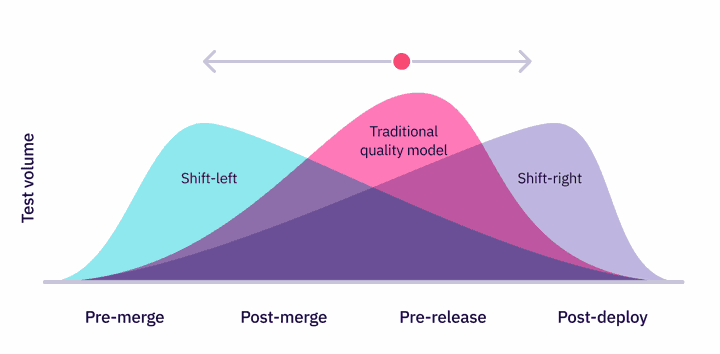

Every QE engineer has the same picture in their head when they hear the word "testing." A pyramid. A wide base of unit tests, a thinner band of integration tests, a small layer of end-to-end checks at the top. Mike Cohn popularized it in his 2009 book Succeeding with Agile. We have been arguing about its proportions ever since, but the shape itself has held up for nearly two decades because the systems we tested were deterministic. The same input produced the same output. A bug was a thing you could reproduce, ticket, and fix.

That world is ending faster than most QE teams realize.

Stanford's 2026 AI Index, released in April, reports that AI agents jumped from roughly 12 percent accuracy to 66.3 percent on OSWorld, a benchmark that asks them to complete real computer tasks across operating systems. That is within six points of human performance, in a single year. METR, an independent evaluation lab, has been tracking how long a task an AI can complete with 50 percent reliability, and they have found that this "task length horizon" doubles roughly every seven months, with the post-2023 doubling time accelerating to about 131 days.

A system that can autonomously do an hour of human work today is, on METR's own published projection, a system that can plausibly do week-long tasks in the next two to four years. The traditional test pyramid was not designed to test that kind of system. It was designed to test the calculator app, the e-commerce checkout, the API endpoint. When the thing you are testing can write its own code, browse the web, talk to other agents, and produce different output for the same input, the pyramid does not so much collapse as become irrelevant.

Three things that break

Reproducibility breaks first. A unit test assumes that add(2, 3) returns 5 every time. An LLM call given the same prompt at temperature zero will often return the same output, but raise the temperature, swap the model version, change a single token in the system prompt, and you are testing something different. "Flaky test" in traditional QE was a problem to solve. In AI testing, flakiness is the baseline.

Coverage breaks next. A traditional test plan asks: Which input classes have we covered? Equivalence partitioning, boundary value analysis, and decision tables. The input space of a chatbot is the set of all strings, in any language, in any tone, with any embedded image, document, or hidden instruction. Microsoft's April 2025 taxonomy of failure modes in agentic AI lists memory poisoning as "particularly insidious" precisely because the input space includes the agent's own past conversations. You cannot enumerate that.

Pass and fail break last, and most painfully. The Stanford RegLab study by Dahl and colleagues, published in the Journal of Legal Analysis, measured hallucination rates of 69 to 88 percent when leading LLMs were asked specific verifiable questions about random federal court cases. Their study did not flag those answers as failed unit tests. Most of the answers looked plausible. They cited cases that did not exist. The output was wrong, but it was not a crash, an exception, or a failed assertion. It was a fluent paragraph of fiction. The binary pass/fail of traditional testing has no slot for "confidently wrong."

The AI Quality Stack

The replacement is not another pyramid. It is a stack of five layers plus a cross-cutting band of governance and incident response. Each layer catches different failures. None of them is sufficient on its own.

Layer 1 is the Data and System Design Foundation. It is the part most teams skip in the diagram and pay for in production. The training data behind whatever model you are calling. The labeled examples in your eval set. The retrieval corpus your RAG pipeline pulls from. The system prompt that frames every conversation. The architecture decisions about which model and which tools you wired together. In my experience, around eighty percent of agent failures in production trace back to something in this layer rather than to a missing test. Bad retrieval. An unclear system prompt. A tool description the model misread. The eval suite did its job. The foundation was wrong.

Layer 2 is Offline Evaluations. This is where most QE engineers will start, because it looks the most like what they already do. You build a labeled dataset, you run the model against it, you measure a pass rate. The difference is that "pass" is now graded, often by another model acting as a judge, and that judge has its own reliability problems. Capability evaluations and dangerous-capability assessments, the kind METR runs to estimate how long an autonomous task an agent can complete, also live here, and they are a separate measurement discipline from regression-style eval suites.

Layer 3 is Adversarial Testing and Red Team. This is closer to security testing than to QA, but the skill set is recognizable. You are trying to make the system do something it should not do. Anthropic's January 2025 Constitutional Classifiers paper documented over 3,000 hours of red teaming with 183 active participants, looking for a single universal jailbreak. None was found. That number gives you a sense of how much time mature AI quality work involves at this layer.

Layer 4 is Runtime Guardrails. Input classifiers that block obviously malicious prompts. Output filters that catch policy violations before the user sees them. Refusal training. Tool sandboxing. Human-in-the-loop escalation paths for high-risk actions. These are not tests, they are defenses. They belong in the quality picture because they are how you survive the failures the earlier layers did not catch.

Layer 5 is Production Observability. Traces of every LLM call. Drift detection on input distributions. Hallucination scoring on real traffic. Cost and latency monitoring per model and per user. Continuous user feedback. This is the layer that catches what the offline evals missed, which will always be most of it. The ratio of issues found in production to issues found in pre-deployment evals is, in my experience, much higher than in traditional software.

And running across all five layers is a cross-cutting band of Governance, Documentation, and Incident Response. Model cards. Eval reports. Rollback procedures. Versioned prompts. Who pages when the agent posts something illegal at three in the morning. Traditional QE has these in different names: release notes, runbooks, on-call rotations. The team that ships an agent without them discovers the gap during their first real incident.

What this means for QE engineers

If you are in QE today, the honest read is that your unit-test instincts will not transfer cleanly, but your testing instincts will. The disciplines that matter most in AI quality, designing labeled datasets, thinking adversarially, reasoning about coverage of an unbounded input space, separating signal from noise in flaky measurements, are exactly what good QE engineers already do. The frameworks change. Pytest becomes Inspect or DeepEval. Test plans become eval harnesses. Bug reports become trace analyses. The mental model of "I am the last line of defense between the developer and the user" stays.

The pyramid was a useful picture for nearly two decades. The thing replacing it is messier, less settled, and harder to draw. That is also why the next few years are going to be the most interesting time to be in this field that we have seen since the move from waterfall to agile.

The honest read on the AI Quality Stack is that no single team is doing all six layers well in 2026. Most are strong in one or two and patchy across the rest. If you are stepping into this field from QE, the practical advice is to pick one layer and get genuinely good at it before moving to the next. Layer 2 (offline evaluations) is the easiest entry point. Layer 1 (the foundation) is the highest leverage. Layer 3 (red team) is where the most public credibility lives. The right choice depends on what you want to be known for.

Sources

Stanford HAI, 2026 AI Index Report, April 2026: https://hai.stanford.edu/ai-index/2026-ai-index-report

METR, Measuring AI Ability to Complete Long Tasks, March 2025: https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/

METR, Time Horizon 1.1, January 2026: https://metr.org/blog/2026-1-29-time-horizon-1-1/

Sharma et al., Constitutional Classifiers: Defending against Universal Jailbreaks across Thousands of Hours of Red Teaming, January 2025: https://arxiv.org/abs/2501.18837

Anthropic, Constitutional Classifiers research overview: https://www.anthropic.com/research/constitutional-classifiers

Dahl, Magesh, Suzgun, Ho, Large Legal Fictions: Profiling Legal Hallucinations in Large Language Models, Journal of Legal Analysis, 2024: https://reglab.stanford.edu/publications/hlarge-legal-fictions-profiling-legal-hallucinations-in-large-language-models/

Microsoft, Taxonomy of Failure Modes in Agentic AI Systems, April 2025: https://www.microsoft.com/en-us/security/blog/2025/04/24/new-whitepaper-outlines-the-taxonomy-of-failure-modes-in-ai-agents/